POL 138 Fall 2025

Introduction

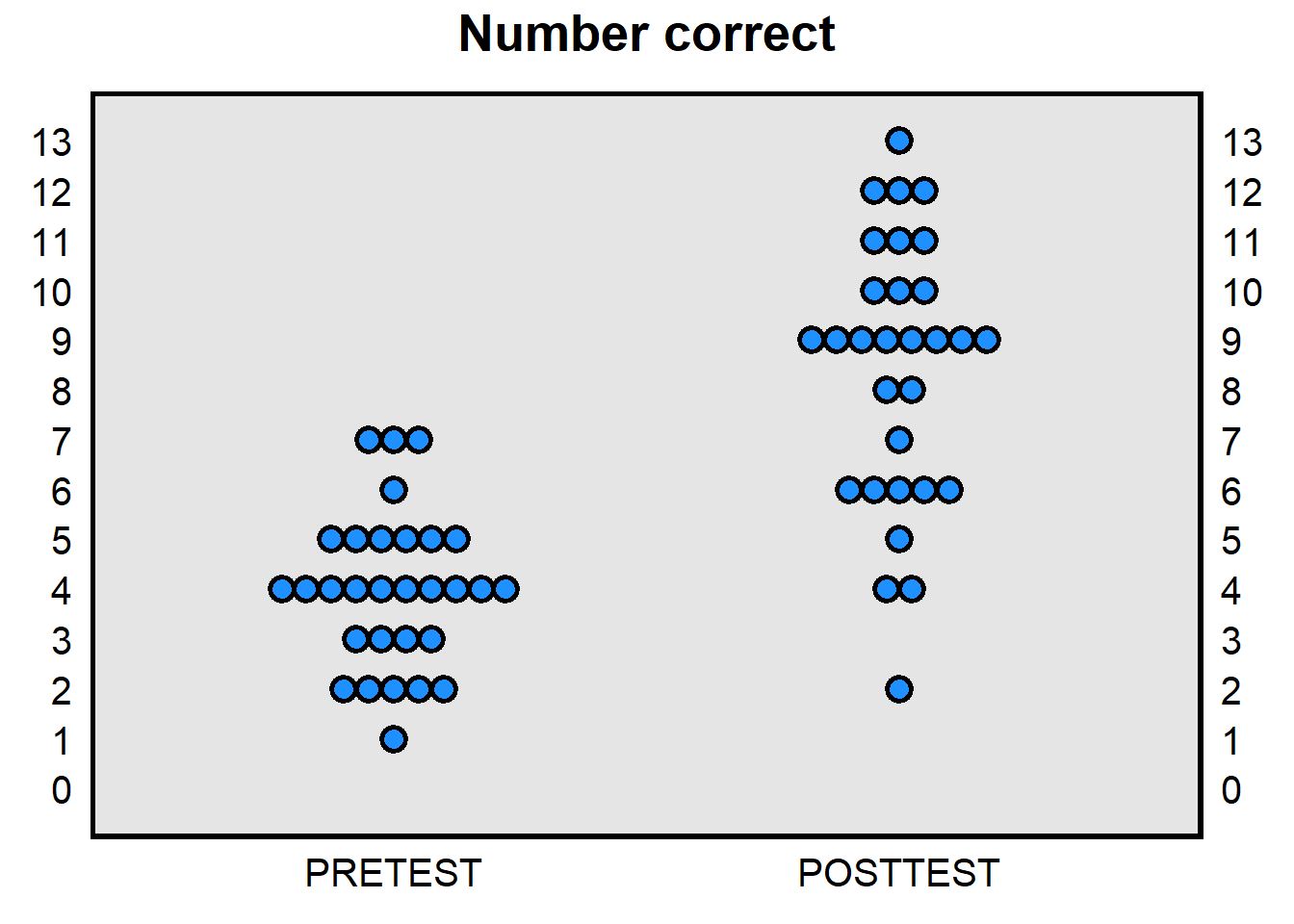

The data above are from 30 students in a prior POL 138 course. Each dot in the left PRETEST column represents one student’s number of items correct on a 13-item multiple-choice test about POL 138 content that the students took at the start of the semester; the average number correct on this pretest was 4.0. This pretest was not returned to the students until the end of the semester.

Each dot in the right POSTTEST column represents one student’s number of items correct on the same 13-item multiple-choice test, which the students took again at the end of the semester; the average number correct on this posttest was 8.4. So, across the 30 students in the class, the average number correct was 4.4 items higher on the posttest than on the pretest.

This 4.4-item increase might be due only to students being, on average, luckier guessers on the posttest than on the pretest. But it’s also possible that, on average, students learned something about POL 138 content. Can we tell the difference? In particular, are these data sufficient to conclude, at a reasonable level of certainty, that (at least on average) these students learned something about POL 138 content?

Answer

Yes, these data are enough evidence, at a reasonable level of certainty. The logic of the statistical analysis is as follows. The observed difference in average scores is 4.4 items. First, we combine the 30 pretest scores and the 30 posttest scores into one group of 60 scores. Then we randomly assign 30 of these scores to one group and the other 30 scores to a second group, and we calculate the difference in average scores between these two random groups. We repeat this random simulation many times. This randomization represents what would happen if students truly did not learn or forget anything about the test content: in the simulation, any difference between the two groups of scores is due to random chance alone.
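The resampling procedure described above, together with the tally of simulations described in the next paragraph, can be sketched in Python. This is a minimal sketch under stated assumptions, not the actual analysis code used for the course: the function name `permutation_share`, the default of 10,000 shuffles, and the fixed random seed are illustrative choices, and the example scores are hypothetical rather than the 30 students’ data. It follows the pooling approach exactly as described (all scores combined into one group, then split at random).

```python
import random


def permutation_share(pretest, posttest, n_sims=10_000, seed=0):
    """Pool all scores, shuffle them into two random groups many times, and
    return (observed difference, share of shuffles whose difference is at
    least as large as the observed one)."""
    def avg(xs):
        return sum(xs) / len(xs)

    observed = avg(posttest) - avg(pretest)
    pooled = list(pretest) + list(posttest)  # one combined group of scores
    n_post = len(posttest)
    rng = random.Random(seed)                # fixed seed so runs repeat
    hits = 0
    for _ in range(n_sims):
        rng.shuffle(pooled)                  # random reassignment of scores
        group_a = pooled[:n_post]            # stands in for the "posttest"
        group_b = pooled[n_post:]            # stands in for the "pretest"
        if avg(group_a) - avg(group_b) >= observed:
            hits += 1
    return observed, hits / n_sims


# Hypothetical scores for 10 students (NOT the course data).
pre = [3, 4, 5, 4, 3, 5, 4, 4, 3, 5]
post = [9, 8, 9, 10, 8, 9, 8, 9, 10, 8]
observed, share = permutation_share(pre, post)
print(f"observed difference: {observed:.1f} items")
print(f"share of shuffles at least that large: {100 * share:.4f} percent")
```

With 10,000 shuffles, the smallest nonzero share this sketch can report is 1/10,000 (0.01 percent), so a percentage as small as the one reported in the next paragraph could only be estimated with vastly more shuffles or computed exactly.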

Next, we calculate the percentage of simulations in which the difference in average scores between the two random groups was at least 4.4 items. The smaller this percentage, the stronger the evidence that the observed 4.4-item difference was not due to random guessing alone. For the data in the plot above, the random groups differed by at least 4.4 items in only 0.00000007682 percent of the simulations. That means that a 4.4-item difference between the pretest and the posttest would have been very unlikely if students had merely been luckier guessers on the posttest than on the pretest.

Regarding the pretest, the plan is to give students in this POL 138 course a test at the beginning of the semester as a pretest, give them the same test as a posttest at the end of the semester, compare the number of items correct on the two tests to estimate the amount of learning (or unlearning), and then conduct a statistical analysis to assess how much evidence there is that, on average, students learned (or unlearned) something about POL 138 content during the semester.

Let’s discuss some potential research design decisions for this pretest/posttest analysis:

Should I review the pretest items with you right now, tell you the correct responses, and explain why those responses are correct?

- Yes

- No

Answer

No. I am interested in how much you learn about all of our POL 138 content, not just the POL 138 content that is on the pretest. If we pay special attention to the content on the pretest, then the results of the pretest/posttest analysis will plausibly not be representative of how much you learn about all of our POL 138 content.

In this course, we will have five exams: Exam 1, Exam 2, Exam 3, Exam 4, and, during finals week, the Final Exam. Of the following options, which would produce the most accurate estimate of the amount of learning among POL 138 students during the semester? Assume that I do not permit students to make up the pretest or the posttest.

- (A) Students take the posttest at the start of the Exam 4 class meeting, before taking Exam 4.

- (B) Students take the posttest at the start of our last regular class meeting, the week before the final exam.

- (C) I put the posttest items on the final exam, count these items toward your final exam score, and use responses to those items as each student’s posttest.

Answer

Of the options, (A) is probably best. An important element of an accurate pretest/posttest comparison is to keep everything equal except the point in the semester at which students take the test. For example, when you took the pretest, you did not get more points toward your final grade for getting more items correct, and we want to keep that feature for the posttest. Suppose that I put the posttest items on the final exam, as in option (C), and students did better on average on those items. Then I could not know with any certainty whether the higher average score was because you learned during the semester or merely because you tried harder on items that counted toward your final grade.

Another important element of an accurate pretest/posttest comparison is for all students to take both the pretest and the posttest. That’s why option (B) isn’t ideal: of the three options, students are more likely to miss the final class meeting than to miss Exam 4 or the Final Exam. Suppose that I give the posttest at the final class meeting before the final exam, and only 70% of students come to that class meeting. If the students who come are on average better students than the students who do not, then the average posttest score would be higher merely because the students who took the posttest are not representative of the students in the course.

Suppose that, right now, I gave each of you another pretest that was exactly as difficult on average as the pretest that you just took. Let’s assume that no student has yet learned or unlearned anything about POL 138 content, and let’s assume that each student tries exactly as hard on the second pretest as on the first pretest. Which of the following would you predict about the performance on this second pretest among the ten students who had the highest scores on the first pretest?

Compared to the percentage correct on the first pretest, these students would likely…

- (A) …get a lower percentage correct on the second pretest

- (B) …get the same percentage correct on the second pretest

- (C) …get a higher percentage correct on the second pretest

Answer

Option (A): The ten students who had the highest score on the first pretest would likely get a lower percentage correct on the second pretest.

Your percentage correct on the multiple-choice pretest depended on how many items you knew the correct response to and how lucky you were when guessing. The highest scores on the first pretest come from a combination of students who knew many of the correct responses and students who were lucky guessers on the first pretest. But lucky guessing is random: students who had good luck guessing on the first pretest are expected to have only average luck guessing on the second pretest, so the ten students who had the highest scores on the first pretest would likely get a lower percentage correct on the second pretest.
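The reasoning above can be checked with a small simulation. This is a minimal sketch under assumptions that are not in the original: each item is assumed to have four response options (so a blind guess is right with probability 0.25), each hypothetical student is assumed to know between 0 and 6 of the 13 items, and knowledge is held fixed between the two pretests so that only guessing luck changes. The names `take_test` and `regression_demo` are illustrative.

```python
import random

N_ITEMS = 13      # items on the pretest (from the text)
P_GUESS = 0.25    # assumption: four response options per item
N_STUDENTS = 30   # class size, matching the prior course


def take_test(known, rng):
    """Score = items the student knows + lucky guesses on the rest."""
    lucky = sum(rng.random() < P_GUESS for _ in range(N_ITEMS - known))
    return known + lucky


def regression_demo(n_classes=1000, seed=1):
    """Average change from pretest 1 to pretest 2 for the ten highest and
    ten lowest first-pretest scorers, with knowledge held fixed."""
    rng = random.Random(seed)
    top_total = bottom_total = 0.0
    for _ in range(n_classes):
        known = [rng.randint(0, 6) for _ in range(N_STUDENTS)]  # hypothetical
        first = [take_test(k, rng) for k in known]
        second = [take_test(k, rng) for k in known]  # same knowledge, new luck
        ranked = sorted(range(N_STUDENTS), key=lambda i: first[i])
        bottom, top = ranked[:10], ranked[-10:]
        top_total += sum(second[i] - first[i] for i in top) / 10
        bottom_total += sum(second[i] - first[i] for i in bottom) / 10
    return top_total / n_classes, bottom_total / n_classes


top_change, bottom_change = regression_demo()
print(f"top ten: average change of {top_change:+.2f} items")
print(f"bottom ten: average change of {bottom_change:+.2f} items")
```

Because knowledge never changes in the simulation, any average change for the top or bottom ten comes only from guessing luck. The top group’s average change comes out negative and the bottom group’s positive, matching the answer to this question and to the one that follows.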

This phenomenon is called regression toward the mean, and it applies to any measurement that has a random component: extreme observations from a random process are expected to be followed by less extreme observations from that process.

Suppose that, right now, I gave each of you another pretest that was exactly as difficult on average as the pretest that you just took. Let’s assume that no student has yet learned or unlearned anything about POL 138 content. Which of the following would you predict about the performance on this second pretest among the ten students who had the lowest scores on the first pretest?

Compared to the percentage correct on the first pretest, these students would likely…

- (A) …get a lower percentage correct on the second pretest

- (B) …get the same percentage correct on the second pretest

- (C) …get a higher percentage correct on the second pretest

Answer

Option (C): The ten students who had the lowest score on the first pretest are expected to get a higher percentage correct on the second pretest, due to regression toward the mean.

Getting an extremely low score on a multiple-choice test reflects some combination of not knowing many correct responses and unlucky guessing, and these students are expected to have closer to average luck guessing on the second pretest.

Suppose that some students do not take the pretest but do take the posttest. Suppose also that, when the instructor estimates the learning between the pretest and the posttest, the instructor enters a zero for these students’ pretest scores, even though these students would probably not have gotten exactly zero correct on the pretest. That coding of zero would plausibly bias the estimate of the amount of learning in POL 138 to be…

- lower than the true amount of learning

- higher than the true amount of learning

Answer

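- higher than the true amount of learning

Entering a zero understates these students’ pretest scores, so their pretest-to-posttest gains, and therefore the class average gain, look larger than they really are.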
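A short derivation shows why. As an illustrative setup (these symbols are introduced here, not in the original), let $n$ students take the posttest, let $M$ be the set of $m$ students who missed the pretest, let $Q_i$ be student $i$’s posttest score, and let $P_i$ be the pretest score student $i$ got or would have gotten. $G$ is the true average gain, and $\hat{G}$ is the estimate after zeros are entered for the missing pretests:

```latex
\begin{aligned}
G &= \frac{1}{n}\sum_{i=1}^{n}\left(Q_i - P_i\right),\\
\hat{G} &= \frac{1}{n}\sum_{i=1}^{n} Q_i \;-\; \frac{1}{n}\sum_{i \notin M} P_i
        \;=\; G + \frac{1}{n}\sum_{i \in M} P_i
        \;=\; G + \frac{m}{n}\,\bar{P}_M .
\end{aligned}
```

Here $\bar{P}_M$ is the average pretest score the missing students would have gotten. Because that average is almost surely above zero, $\hat{G}$ overstates $G$ by $\frac{m}{n}\bar{P}_M$ items, and the overstatement grows with the share of students who missed the pretest.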

Suppose that some students take the pretest but do not take the posttest. Suppose that, when the instructor estimates the learning between the pretest and the posttest, the instructor enters a zero for these students’ posttest scores, even though these students would probably not have gotten exactly zero correct on the posttest. That coding of zero will plausibly bias the estimate of the amount of learning in POL 138 to be…

- lower than the true amount of learning

- higher than the true amount of learning

Answer

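- lower than the true amount of learning

Entering a zero understates these students’ posttest scores, so their gains look smaller (often negative), which pulls the class average gain down.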
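A small numerical check of both answers uses a hypothetical five-student class in which every student truly gains 4 items. The helper names `zero_coded_gain` and `complete_case_gain` are illustrative, `None` marks a missing score, and comparing against the average among students with both scores is just one simple reference point, not a method stated in the original.

```python
def zero_coded_gain(pretest, posttest):
    """Average gain when every missing score (None) is entered as zero."""
    pre = [0 if p is None else p for p in pretest]
    post = [0 if q is None else q for q in posttest]
    return sum(q - p for p, q in zip(pre, post)) / len(pre)


def complete_case_gain(pretest, posttest):
    """Average gain among students who have both scores."""
    pairs = [(p, q) for p, q in zip(pretest, posttest)
             if p is not None and q is not None]
    return sum(q - p for p, q in pairs) / len(pairs)


# Hypothetical class: every student truly gains 4 items.
# Case 1: the fifth student skipped the posttest.
pre_1, post_1 = [4, 5, 3, 4, 4], [8, 9, 7, 8, None]
print(complete_case_gain(pre_1, post_1))  # 4.0
print(zero_coded_gain(pre_1, post_1))     # 2.4 (biased low)

# Case 2: the fifth student skipped the pretest.
pre_2, post_2 = [4, 5, 3, 4, None], [8, 9, 7, 8, 8]
print(complete_case_gain(pre_2, post_2))  # 4.0
print(zero_coded_gain(pre_2, post_2))     # 4.8 (biased high)
```

In both cases one zero among five students shifts the estimate by 1.6 items (that student’s score of 8 or 4, spread across five students), in the direction given by the answers above.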

Teaching strategies for this course:

Testing effect. Testing students on material helps them learn it better through practice recalling and applying it.

Distributed practice. Students learn more when study is spaced out over time than when the same amount of study time is crammed together.

Interleaving. Students learn more when they practice a mixture of item types than when they practice only one item type.

Dual coding of text and visuals. Students learn more when information is presented in both verbal and visual form than when it is presented in only one form.

Deep questioning. Students learn more if they practice higher-order thinking about the material.

Summaries of research on learning and study habits

Practical applications of learning science: A handbook for naval instructors, Version 1.0, November 2021

Harold P. Pashler, Patrice M. Bain, Brian A. Bottge, Arthur Graesser, Kenneth Koedinger, Mark McDaniel, and Janet Metcalfe. 2007. Organizing instruction and study to improve student learning: A practice guide. National Center for Education Research. NCER 2007-2004.

John Dunlosky. 2013. Strengthening the student toolbox: Study strategies to boost learning. American Educator 37: 12-21.

Selected research on laptop use in college courses

We present findings from a study that prohibited computer devices in randomly selected classrooms of an introductory economics course at the United States Military Academy. Average final exam scores among students assigned to classrooms that allowed computers were 0.18 standard deviations lower than exam scores of students in classrooms that prohibited computers. Through the use of two separate treatment arms, we uncover evidence that this negative effect occurs in classrooms where laptops and tablets are permitted without restriction and in classrooms where students are only permitted to use tablets that must remain flat on the desk.

Computers and productivity: Evidence from laptop use in the college classroom

This paper evaluates the effect of classroom computer use on academic performance. Using a quasi-experimental design and administrative data, we find that computer use in college classrooms has a negative impact on course grades. Our study exploits institutional policies that generate plausibly random variation in laptop use within the classroom. Compared to students who are not affected by computer policies, students who are induced to use computers in class perform significantly worse and students who are influenced not to use computers perform significantly better. We find that the negative effects of computer use are concentrated among males and low-performing students and more prominent in quantitative courses.

Laptop multitasking hinders classroom learning for both users and nearby peers

Laptops are commonplace in university classrooms. In light of cognitive psychology theory on costs associated with multitasking, we examined the effects of in-class laptop use on student learning in a simulated classroom. We found that participants who multitasked on a laptop during a lecture scored lower on a test compared to those who did not multitask, and participants who were in direct view of a multitasking peer scored lower on a test compared to those who were not. The results demonstrate that multitasking on a laptop poses a significant distraction to both users and fellow students and can be detrimental to comprehension of lecture content.

Logged in and zoned out: How laptop internet use relates to classroom learning

Laptop computers are widely prevalent in university classrooms. Although laptops are a valuable tool, they offer access to a distracting temptation: the Internet. In the study reported here, we assessed the relationship between classroom performance and actual Internet usage for academic and nonacademic purposes. Students who were enrolled in an introductory psychology course logged into a proxy server that monitored their online activity during class. Past research relied on self-report, but the current methodology objectively measured time, frequency, and browsing history of participants’ Internet usage. In addition, we assessed whether intelligence, motivation, and interest in course material could account for the relationship between Internet use and performance. Our results showed that nonacademic Internet use was common among students who brought laptops to class and was inversely related to class performance. This relationship was upheld after we accounted for motivation, interest, and intelligence. Class-related Internet use was not associated with a benefit to classroom performance.

As technologies have become more portable, scholars have turned their attention to whether the use of electronic devices during lecture positively or negatively affects student performance in the class. In this study, I investigate the effects of banning laptops in the classroom through an experiment conducted over two semesters in an introductory American politics course at a large, public four-year university. Overall, I find that banning laptops is more likely to hinder student performance in the class than help. Although students find many elements of the course to be more helpful to their learning in the laptop-free sections, this does not translate to greater student achievement or lead to significantly different evaluations on the official university teaching evaluations. Overall, these findings suggest that although instructors are not penalized for banning laptops from their classrooms, they ought to carefully consider the extent to which such policies are helpful to student progress in large lecture classes.

From the study immediately above:

One section each term was randomly assigned to a “laptop ban” condition (LBC) in which students were prohibited from using laptops to take notes.

Randomization in a randomized experiment helps isolate effects. Does the randomization above – one section randomly selected for a laptop ban – sufficiently help isolate the effect of the laptop ban?